Microsoft Agent Framework Part 3 Lands: .NET Agents Grow Up (and Get Memory)

TL;DR:

On May 4, 2026, Microsoft shipped Part 3 of the Microsoft Agent Framework for .NET, turning last year’s “cool demo agents” into something you can plausibly run in production. The big themes: stateful memory, tool orchestration, and graph‑based workflows—all first‑class in C#. If you’re building AI features on Azure with .NET 10, this release materially changes how you should structure agentic systems.

What actually shipped (and why it matters)

The May 4 post, Microsoft Agent Framework – Building Blocks for AI Part 3, is not a marketing recap—it’s a concrete SDK evolution aimed squarely at production workloads (devblogs.microsoft.com).

Part 3 adds three things engineers have been duct‑taping together:

-

Persistent agent memory

Agents can now carry structured memory across turns and tasks, rather than relying on oversized prompts or fragile in‑prompt summaries. -

Tool-first execution

Tools are no longer an afterthought. Agents reason about tools and invoke them deterministically—think “planner + executor,” not “LLM with vibes.” -

Graph-based workflows

Instead of linear chains, you can define agent flows as graphs. Branching, retries, and fallbacks are explicit, inspectable, and testable.

In short: this release nudges .NET agents from chatbots toward systems.

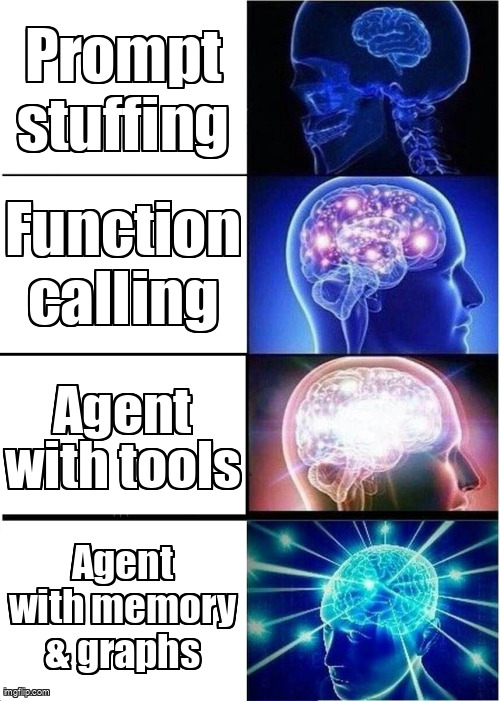

A quick mental model

Microsoft’s own framing is useful here:

If MEAI is like having a conversation with a colleague, an agent is like handing them a to‑do list and letting them figure it out. (devblogs.microsoft.com)

Part 3 is about finally giving that colleague:

- a notebook (memory),

- a toolbox (first‑class tools),

- and a flowchart (graphs).

What changes for .NET engineers

1. Memory stops being a prompt problem

Before Part 3, “agent memory” usually meant:

- shoving more context into the prompt, or

- rolling your own vector store glue.

Now, memory is an SDK concept. You still choose the backing store (Cosmos DB, Redis, etc.), but the shape of memory is standardized.

Implication:

You can version, test, and reason about memory the same way you do application state—without inventing yet another abstraction.

2. Tool orchestration becomes deterministic

Tool calls are no longer “LLM decides, good luck.” The framework formalizes:

- tool schemas,

- invocation boundaries,

- and result handling.

That matters for cost and latency:

- Fewer accidental tool calls.

- Easier to short‑circuit expensive operations.

- Cleaner retries when Azure services hiccup.

This aligns with broader guidance Microsoft has been pushing in recent agent samples, including the ConferencePulse app built on the same composable stack (devblogs.microsoft.com).

3. Graph workflows fit Azure-native thinking

Graph-based agent flows map cleanly to how Azure engineers already think:

- retries,

- fan‑out/fan‑in,

- compensating actions.

If you’ve ever squinted at an LLM chain and thought “this should be a state machine”, congratulations—Microsoft agrees.

This also pairs well with Agent 365, which is adding centralized governance and lifecycle management for agents across environments (techcommunity.microsoft.com).

A minimal C# sketch

Nothing exotic—just more explicit structure:

var agent = new AgentBuilder()

.WithMemory(new CosmosAgentMemory(cosmosClient))

.WithTool(new SearchTool())

.WithTool(new OrderApiTool())

.WithWorkflow(graph =>

{

graph.StartWith("Plan")

.Then("Search")

.If("FoundResult", "ExecuteOrder")

.Else("AskUser");

})

.Build();

The important part isn’t the syntax—it’s that planning, memory, and execution are no longer implicit.

Cost, latency, and operational reality

A few pragmatic takeaways:

-

Cost:

Structured memory and graphs reduce token bloat. Expect fewer “runaway” prompts. -

Latency:

Deterministic tool execution cuts unnecessary round‑trips, especially when paired with Azure-hosted tools. -

Observability:

Graph nodes give you natural logging and metrics points—something raw prompt chains never did well.

This is consistent with Microsoft’s recent push toward governable, observable agent systems rather than free‑form prompt magic (devblogs.microsoft.com).

Should you adopt now?

If you’re:

- already on .NET 10, and

- shipping AI features that do work (not just chat),

then yes—this is worth piloting.

If you’re still experimenting, Part 3 is also a strong signal of where Microsoft wants the ecosystem to go: fewer prompts, more structure.

Further reading

- https://devblogs.microsoft.com/dotnet/microsoft-agent-framework-building-blocks-for-ai-part-3/

- https://devblogs.microsoft.com/dotnet/building-ai-conference-app-dotnet-composable-stack

- https://techcommunity.microsoft.com/blog/agent-365-blog/what%E2%80%99s-new-in-agent-365-may-2026/

- https://devblogs.microsoft.com/dotnet/generative-ai-for-beginners-dotnet-version-2-on-dotnet-10/